Hello and welcome to another Burf Update. This one focuses on ROBOTS and the Apple Vision Pro. I know, you're probably thinking, hang on, don't they all feature robots? What's different? Well, at work we received 2 Apple Vision Pros to play with. These $3,500 devices are not in the UK yet, but the boss managed to get 2. These super cool devices let you do spatial computing (I had a go, and it really is a game changer).

So when they arrived, my company (Compsoft Creative) wanted to do something cool with them, and after a brief chat we decided to use them to control my Inmoov robot.

After an intense 3-day workshop working to get the head, hand and finger data from the Apple Vision Pro to control the robot (good work, Dave!), we decided to take it to the next level!

So we are going to take the Inmoov robot to the Robotics and Automation Show at the NEC next week.

The Rush

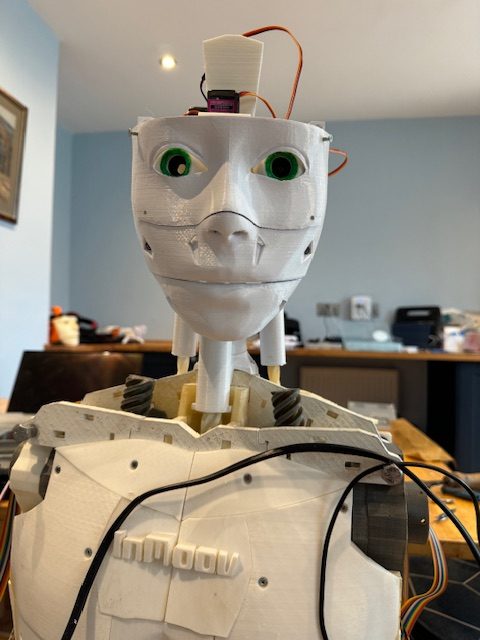

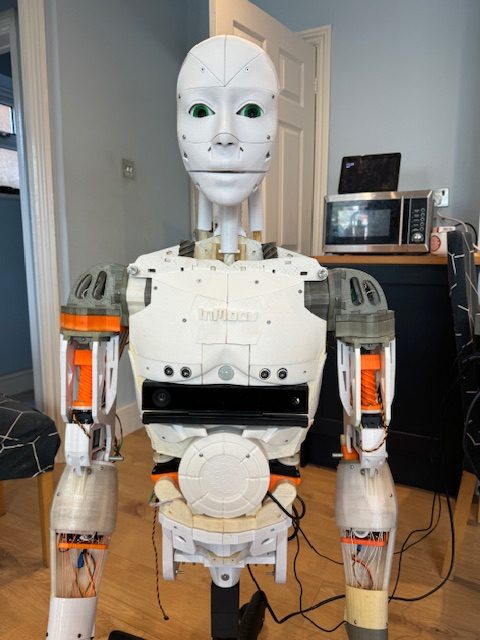

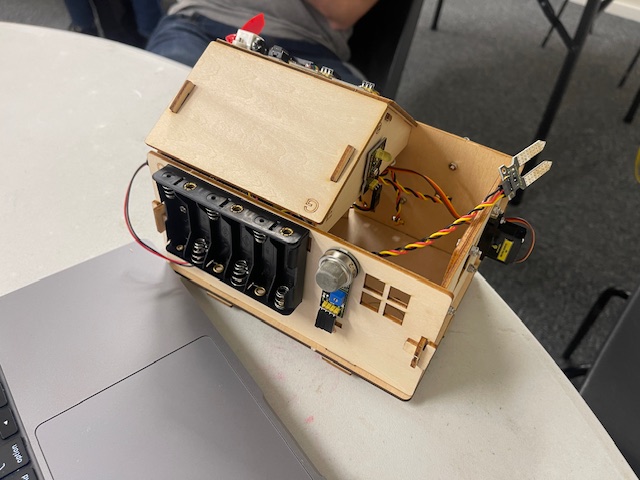

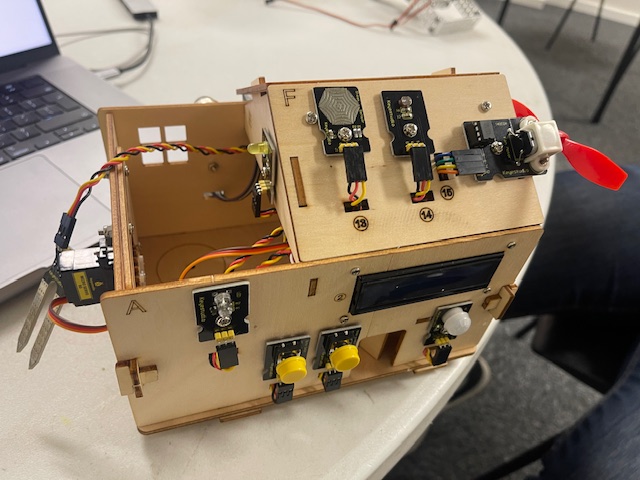

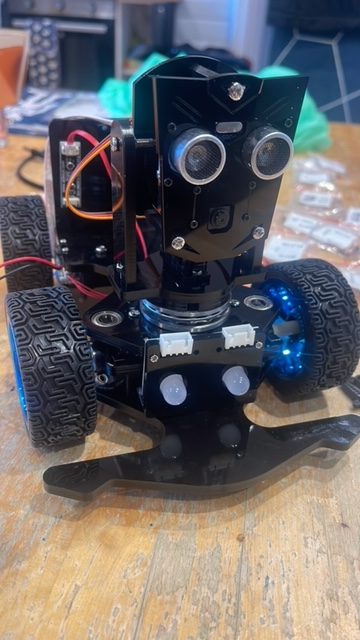

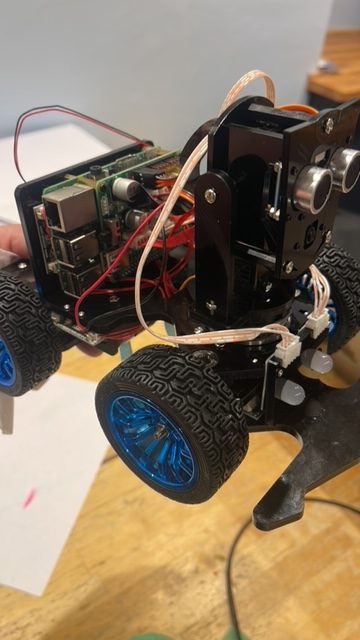

As you can see in the videos above, my Inmoov robot is a bit rough; it's had a very hard life. So I decided it was time to make it look better before the show (which is in about 2 weeks). Work was kind enough to give me time to do it, as they needed the robot for the show and there was a lot of work to do.

So, what did I manage to do?

- New head with a new camera and built-in speaker

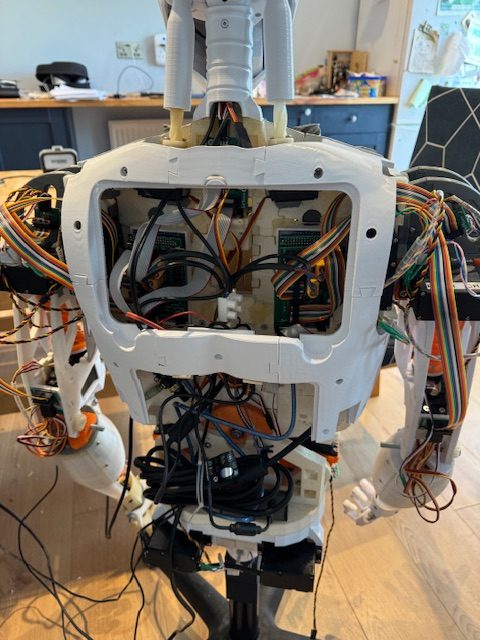

- Completely rewired the robot and removed all the connecting blocks

- New chest plates, sensors, etc.

- New back plates

- Fingertips added

- Ears added (not sure about them)

- A new neck which is a lot stronger

- Printed a case for the power supply

- Set up a Compsoft profile in MyRobotLab (it took hours to get this working well)

One off the list

One of my goals for the year was to finish the Inmoov robot, and I can say pretty confidently that I have achieved this. If the robot stays in one piece for the show, I can also call it reliable.

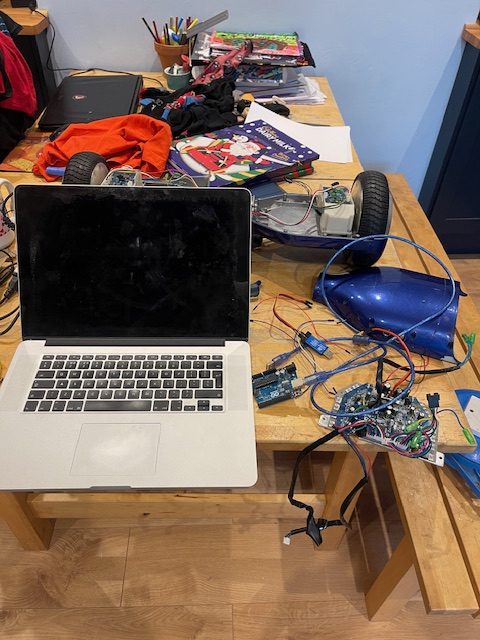

Anycubic Kobra 2 Max 3D printer

Bloody game-changer, that's all I can say! My work bought a 3D printer to help with the printing needed to update the Inmoov robot, because 3D printing is slow as hell. It takes hours, or even days, to print anything large.

Not now it doesn't. This 3D printer is roughly 5 to 6 times faster than my old one: a 16-hour print takes about 3 hours (at standard speed, and there is a sports mode!). Honestly, it's the most epic, exciting toy I have played with for ages, and it opens the door to a lot of crazy ideas.

Goals for the year

It's always good to look back at the goals, so let's see if we can cross some off.

This year's targets

Finish printing the 3D parts, make improvements to the bodyRebuild / Fix the head as the eyes do not function very wellLearn MyRobotLab (MRL)MRL: Be able to say some words, and it can perform a custom gesture- MRL: Be able to track or detect people using OpenCV

Plan to do a bit more on this - MRL: Be able to call a custom API

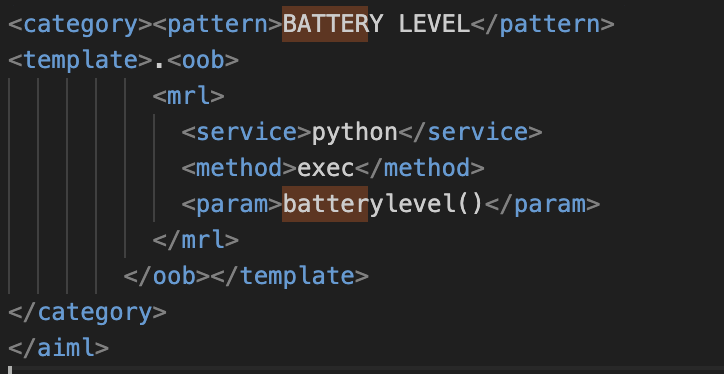

Plan to do a bit more on this - MRL: Learn more about AIML (there is a Udemy course)

Started watching the AIML course - Fire up the KinectV2 sensor that’s in the robot

Not done yet, however, Inmoov does not support this. Improve the wiring in the Inmoov robot as it is a mess

So over half the list is done, and one item (the Kinect) is no longer possible, so I may replace it with something else. That's a great start to the year.

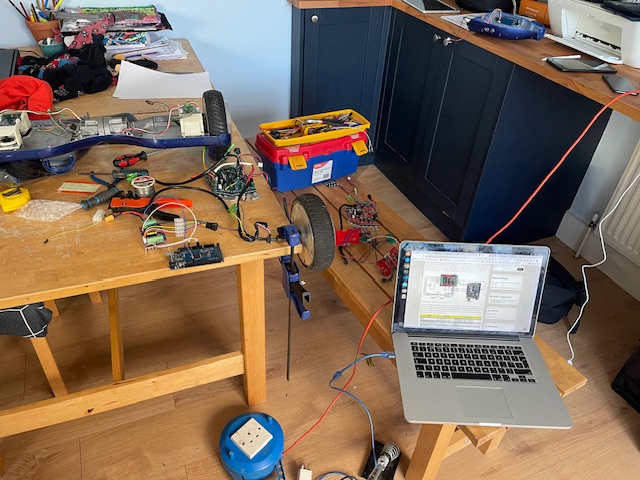

ROS2 mentorship

I saw a post on LinkedIn offering free mentorship in robotics and ROS. Of course, me being me, I applied and got accepted into the group. It has weekly sessions and lots of useful resources; however, because I've been super busy with the Inmoov robot, I am massively behind. I still see this as a great way to kickstart my ROS2 learning, even if I can't keep up.

Vehicles Update

Jeff the Navara has been sold, as I barely used it and it seemed a waste of money. The Harley is also up for sale for the same reasons. My dad gave me his 250cc scooter (well, swapped it for some LEGO), and my Gwiz is on the road and I am LOVING IT. The Gwiz AC version is so much fun, and it's now my main vehicle.